Now hiring!

AI security tools are becoming common in smart contract development, but meaningful comparisons remain rare. Most tools do not publish evaluation data, and benchmarks often measure only part of what matters in practice.

To address this, we ran AuditAgent across EVMBench, a standardized benchmark for AI vulnerability detection built by OpenAI and Paradigm, and published the full results. This article summarizes three related areas: benchmark performance, the limits of recall-only evaluation, and the constraints shaping AI security tooling in 2026.

EVMBench covers 40 real audit repositories and 120 high-severity vulnerabilities. We ran all 40. AuditAgent achieved 67% post-validation recall, compared to 47% for Claude Opus 4.6 and 38% for GPT-5.2.

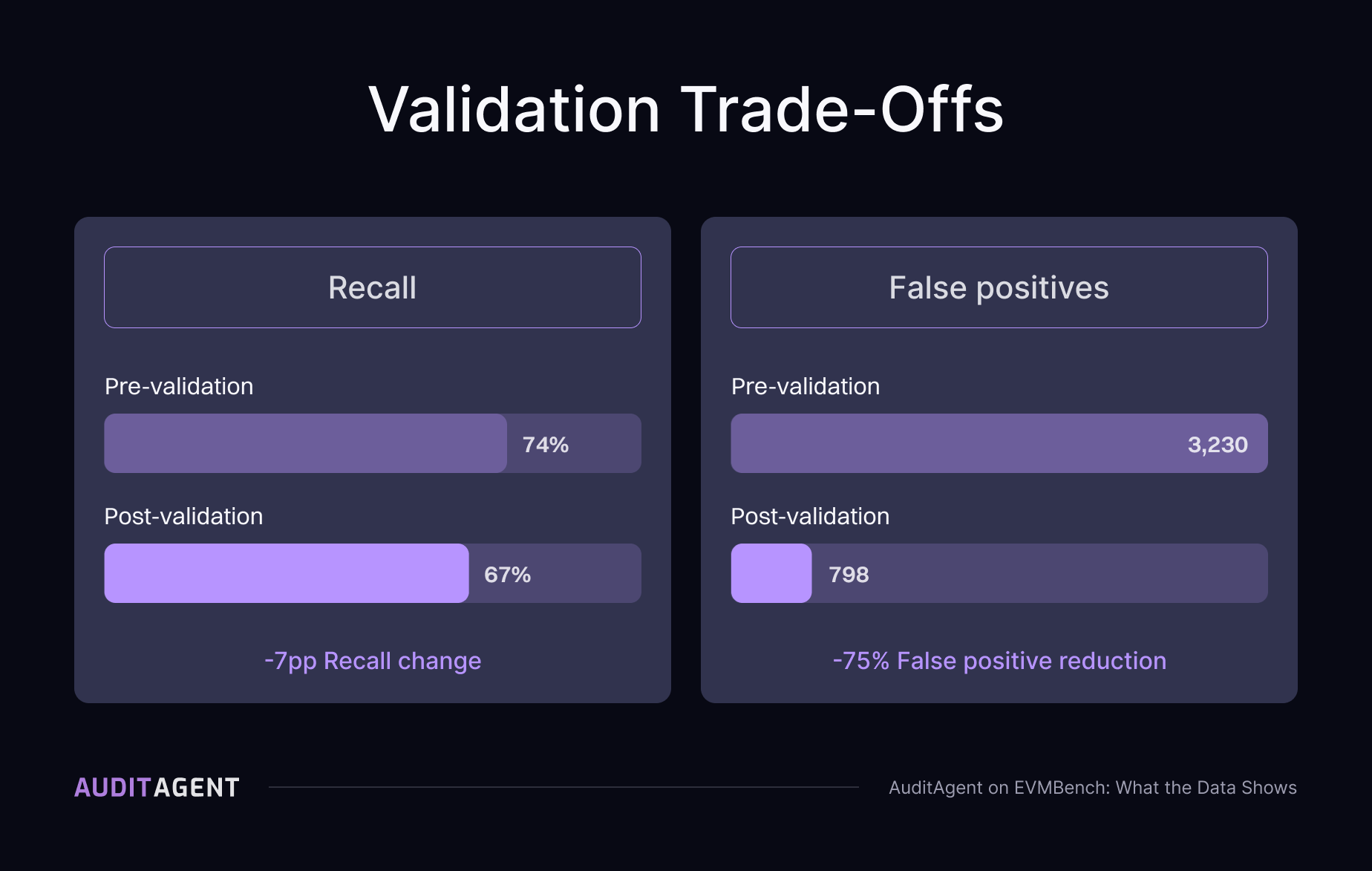

All 40 repositories were run sequentially without selection after the fact. AuditAgent includes a validation phase that filters findings before they are surfaced, so the 67% recall figure reflects post-validation output, which makes it conservative. It also means the findings auditors receive are higher-confidence, not a long queue of candidates to re-triage.

Our internal methodology also tracks partial matches alongside exact ones, separate from EVMBench's binary grading, which gives a more detailed picture of detection quality across severity levels.

Read the full benchmark results and methodology here.

Recall measures whether a system finds known vulnerabilities. It does not measure how much noise comes with them, and in 2025 that gap became the real bottleneck for teams using AI security tooling in production. EVMBench is built around a curated subset of known vulnerabilities, not the complete set of findings from each engagement, so calculating a false positive rate from it is structurally impossible. Benchmarks that measure only coverage systematically understate the cost of using a tool in practice.

Our validation layer filters findings before they are surfaced. We also open-sourced our evaluation algorithm to support SCABench, a community-driven benchmark designed to enable both recall and precision evaluation on real audit data, where complete finding sets per engagement are included rather than a curated subset.

Learn more about recall, false positives, and evaluation limits on the AuditAgent website.

By 2026, detection capability is no longer the main constraint. The focus has shifted to latency, cost per analysis, and ecosystem coverage. The constraints that shaped earlier conversations, whether AI could find real vulnerabilities in 2024 and whether the output was usable in 2025, have mostly been addressed.

Most AI security tooling was built for Solidity and EVM-compatible environments, while Move, Rust, and Cairo represent a growing share of deployed value. A tool that fits scheduled audits but not pre-deployment workflows tends to get deprioritized as development cycles get faster.

Read the full piece on latency, cost, and ecosystem coverage on AuditAgent.

One practical application is running analysis before a manual audit begins. AuditAgent produces structured findings ahead of auditor engagement, reducing time spent on common vulnerability patterns and allowing auditors to focus on logic and cross-contract interactions that require human judgment. Manual audits remain the standard for high-stakes deployments, but the starting point changes.